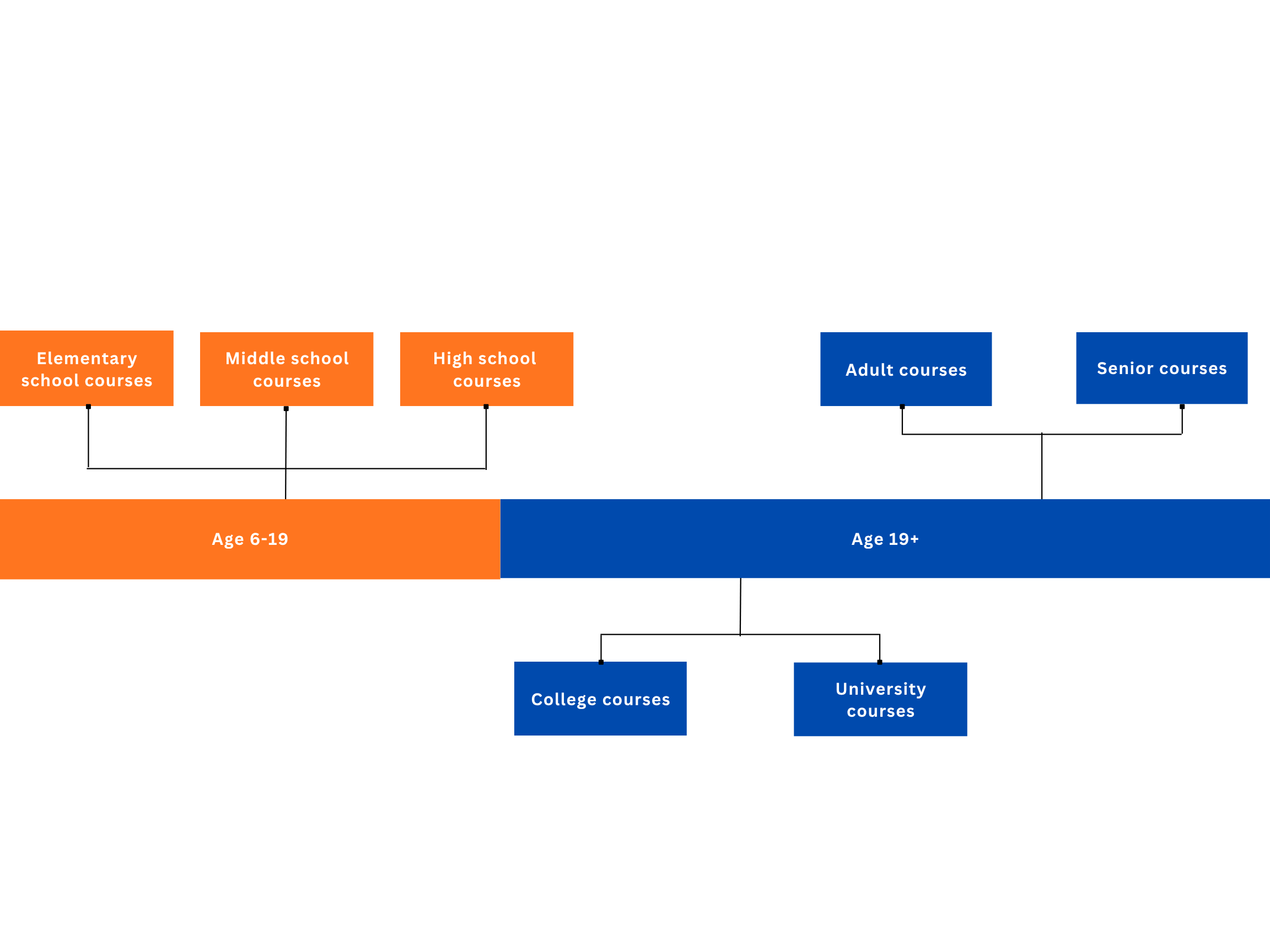

Curriculum Structure

See how the learning model progresses across age groups and learning stages.

IndigiAI is a structured learning system designed to improve critical evaluation of AI-generated information through Indigenous ways of knowing.

Explore the concept robot designed to learn with students in the classroom.

Meet IWOK

A structured educational system.

Maintain and strengthen human critical evaluation.

Evaluate AI information through Indigenous ways of knowing.

Help society use, trust, question, and create with AI more critically.

Different age groups learn differently in style, pace, material, and format. IndigiAI takes this into account and designs each course to suit the level of its target age group.

IndigiAI organizes the classroom around four learning systems: Community, Oral Storytelling, Reflection, and Engagements. Each system is deliberately broad, because Indigenous pedagogies are not narrow "techniques" - they bundle collaboration, land-based experience, oral practice, guided responsibility, and relational accountability together as a coherent way of learning.

Community situates learning as something shared and accountable. Students learn through collaboration and sustained dialogue, not only individual performance. Talk circles and structured discussion create norms where multiple perspectives are expected, claims can be questioned respectfully, and learners practice verifying information together rather than accepting the first plausible answer, whether that answer comes from a peer or from a machine.

Community learning also reconnects knowledge to trust: who is guiding the inquiry, what responsibilities learners have to one another, and how shared standards for evidence are built in a classroom. That matters directly for AI literacy, because many AI tools are designed to simulate a private "assistant," which can quietly train habits of isolated, authority-free acceptance unless a community frame is actively rebuilt.

Oral storytelling and oral learning practices carry knowledge with context: motives, morals, consequences, and relationships that written snippets often strip away. In this course, oral work is not "extra engagement" - it is a method for practicing patience, listening, and interpretation before judgment.

Story-centered learning also pairs naturally with learning from Elders and trusted knowledge holders, where guidance is relational and responsibilities are explicit. That provides a clear contrast to AI systems that generate fluent narrative without ethical relationship to the listener or accountability to a community.

Reflection is the bridge between experience and understanding. Students pause through guided prompts, journals, circle protocols, and structured review so that information becomes knowledge, tested against what they observed, what they tried, and what happened in the real world. Reflective learning slows impulsive acceptance and supports relational thinking: how something lands, who it affects, and what obligations follow.

For AI evaluation, reflection is a core skill because models are optimized to sound finished. Reflection trains learners to ask second questions: what is missing, what is assumed, what is unverified, and what would need to be checked with human guidance or real-world comparison.

Engagements emphasize participation and learning through lived context: observation, experience, and land-based learning when appropriate, alongside classroom inquiries tied to real consequences. Students learn by doing, testing ideas, investigating outcomes, and comparing model outputs against reality instead of treating synthetic fluency as sufficient.

This system also foregrounds responsibility and reciprocity: engaging with living systems and human communities as places where knowledge must be practiced carefully. In AI education, that stance is essential, because uncritical use can distribute harms quickly through misinformation, bias-laden outputs, or over-trust in synthetic media unless learners repeatedly reconnect information to impact and accountability.